Introduction

I covered HCI Mesh (dHCI) in a previous blog post when it was first launched with vSAN 7.0 U1. I encourage you to read through it for a better understanding of this feature and its capabilities.

This feature was originally announced with vSAN OSA in 7.0 U1 for standard vSAN clusters: a server cluster could share its vSAN datastore with up to 5 client vSAN clusters, and a client cluster could mount up to 5 remote vSAN datastores. Compute-only clusters were not supported in this initial release.

With subsequent vSAN releases up to vSAN 8, several new capabilities have been added:

- Mounting remote datastores supports one-way and two-way relationships

- Addressed multiple needs through access to hardware performance, capacity, cluster services and resilience via storage policy-based management, providing interoperability between all types of standard OSA clusters with different datastore services. An administrator can define the types of data services they are interested in, which allows them to pick the desired type of storage when deploying VMs.

- Scalability of up to 128 hosts connected to a remote vSAN datastore (hosts from both the server and client clusters included).

- A client cluster can connect to up to 5 remote datastores.

- A server cluster can serve up to 10 client clusters.

- No vSAN license is needed for HCI Mesh compute clusters; they can mount up to 5 remote vSAN datastores.

- Additional guided workflows and health checks for HCI Mesh clusters.

- Easily mount or unmount remote vSAN datastores with assisted workflows and guardrails.

HCI Mesh (dHCI) limits for vSAN up to 8.0 GA

- The vCenter Server license must be Enterprise or Enterprise Plus.

- Client cluster: the number of remote vSAN datastores it can connect to (5 in vSAN 7 U1, unchanged in U2 & U3).

- Server cluster: the number of client clusters a server cluster can serve (5 in vSAN 7 U1, unchanged in U2 & U3, increased to 10 in vSAN 8).

- Datastore host count total: the number of hosts connecting to a vSAN datastore, counting hosts in both the client clusters and the server cluster (64 hosts in vSAN 7 U1, increased to 128 hosts in U2 & U3).

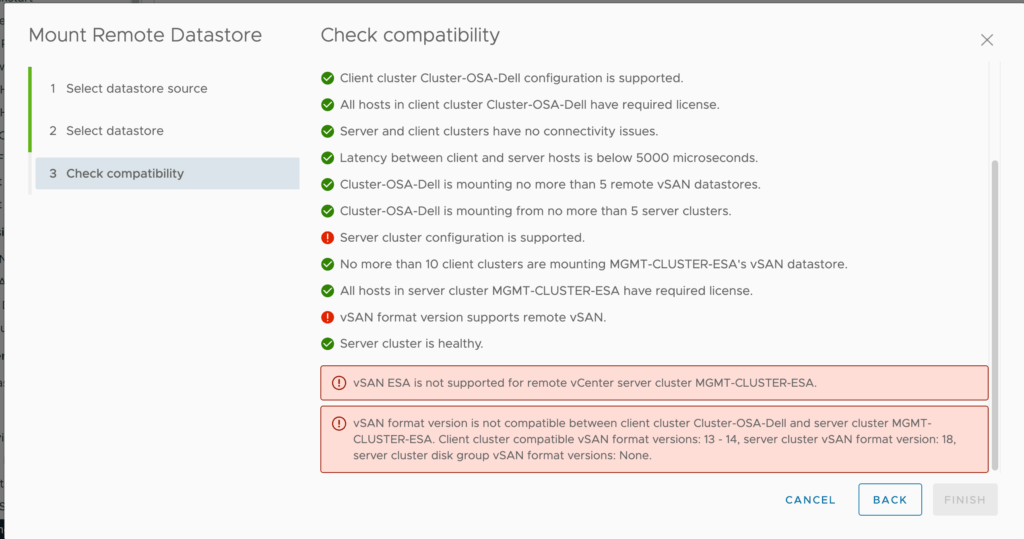

- HCI Mesh is only supported in vSAN 8 when using the Original Storage Architecture (OSA). In vSAN 8, HCI Mesh is not supported when using the Express Storage Architecture (ESA).

- The maximum supported latency between server and client clusters remains 5 ms; VMware recommends latency below 1 ms for optimal performance.

- Several performance enhancements were made to vSAN sub-systems to ensure proper balancing of object ownership and CPU usage on the server cluster.

- The vSAN VMkernel adapter used for inter-host communication within the server cluster (local datastore) must also be used for client cluster vSAN communication. Unlike witness traffic separation in stretched clusters, separate VMkernel adapters cannot be used.

- Clusters running vSAN over RDMA are currently unsupported for HCI Mesh.

- vCLS VMs, which the EAM service on vCenter Server deploys to keep vSphere cluster services such as DRS functional, are not supported on a remote vSAN datastore for a given client cluster. See https://kb.vmware.com/s/article/80877 for more details.
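To make the limits above concrete, here is a minimal Python planning sketch that checks a proposed layout against them. It is not a VMware tool or API: the `Cluster` class, the `check_layout` function and the limit constants are hypothetical, and the numbers are simply the vSAN 8 GA (OSA) values quoted in this post, so verify them against VMware's official configuration maximums for your release.

```python
from dataclasses import dataclass, field

# Limits as quoted in this post for vSAN 8 GA (OSA). They are hard-coded
# for illustration only and are not read from vCenter or any VMware API.
MAX_REMOTE_DATASTORES_PER_CLIENT = 5
MAX_CLIENTS_PER_SERVER = 10
MAX_HOSTS_PER_DATASTORE = 128
MAX_LATENCY_MS = 5.0
RECOMMENDED_LATENCY_MS = 1.0


@dataclass
class Cluster:
    """Hypothetical planning model of one cluster in a dHCI layout."""
    name: str
    hosts: int
    # Remote mounts: server cluster name -> measured latency to it in ms.
    mounts: dict[str, float] = field(default_factory=dict)


def check_layout(clusters: list[Cluster]) -> list[str]:
    """Return findings for a planned layout; every server named in a
    mount must also appear in `clusters`."""
    findings: list[str] = []
    by_name = {c.name: c for c in clusters}
    clients_of: dict[str, list[Cluster]] = {}

    for client in clusters:
        if len(client.mounts) > MAX_REMOTE_DATASTORES_PER_CLIENT:
            findings.append(
                f"ERROR {client.name}: {len(client.mounts)} remote datastores "
                f"(max {MAX_REMOTE_DATASTORES_PER_CLIENT})")
        for server, latency in client.mounts.items():
            clients_of.setdefault(server, []).append(client)
            if latency > MAX_LATENCY_MS:
                findings.append(
                    f"ERROR {client.name}->{server}: {latency} ms "
                    f"(max {MAX_LATENCY_MS} ms)")
            elif latency >= RECOMMENDED_LATENCY_MS:
                findings.append(
                    f"WARN {client.name}->{server}: {latency} ms "
                    f"(below {RECOMMENDED_LATENCY_MS} ms recommended)")

    for server, clients in clients_of.items():
        if len(clients) > MAX_CLIENTS_PER_SERVER:
            findings.append(
                f"ERROR {server}: serves {len(clients)} client clusters "
                f"(max {MAX_CLIENTS_PER_SERVER})")
        # The host total counts the server cluster's own hosts plus all client hosts.
        total = by_name[server].hosts + sum(c.hosts for c in clients)
        if total > MAX_HOSTS_PER_DATASTORE:
            findings.append(
                f"ERROR {server}: {total} hosts on datastore "
                f"(max {MAX_HOSTS_PER_DATASTORE})")
    return findings


if __name__ == "__main__":
    server = Cluster("server-a", hosts=60)
    client1 = Cluster("client-1", hosts=40, mounts={"server-a": 0.5})
    client2 = Cluster("client-2", hosts=40, mounts={"server-a": 2.0})
    for finding in check_layout([server, client1, client2]):
        print(finding)
```

In the example, the 2.0 ms link only produces a warning because it is within the 5 ms limit, while the 140 combined hosts (60 + 40 + 40) exceed the 128-host datastore total.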

What's new in vSAN 8 U1 for HCI Mesh (Disaggregated HCI, dHCI)

Starting with vSAN 8 U1, VMware is phasing out the name HCI Mesh in favor of dHCI (disaggregated HCI). The core features and concepts remain the same as HCI Mesh; only the name has changed.

Storage Disaggregation (dHCI) with the Express Storage Architecture

In vSAN 8 U1, disaggregation of storage (dHCI) is now compatible with the Express Storage Architecture. Users will be able to mount remote vSAN datastores living in other vSAN clusters, and also use an ESA cluster as the external storage resource for a vSphere cluster. Support of disaggregation when using the ESA maintains the interoperability and ease of use our customers have grown to appreciate with disaggregation in vSAN's Original Storage Architecture.

There are still some limitations on how dHCI is supported for ESA and OSA; the table below summarizes them.

| Architecture | Server Cluster | Client Cluster | Supported |
|---|---|---|---|
| ESA | Standard vSAN Cluster | Standard vSAN Cluster | YES |
| ESA | Stretched vSAN Cluster | Standard vSAN Cluster | NO |
| ESA | Standard vSAN Cluster | Stretched vSAN Cluster | NO |
| ESA | Stretched vSAN Cluster | Stretched vSAN Cluster | NO |
| OSA | Standard vSAN Cluster | Standard vSAN Cluster | YES |
| OSA | Standard vSAN Cluster | Stretched vSAN Cluster | NO |
| OSA | Stretched vSAN Cluster | Standard vSAN Cluster | YES |
| OSA | Stretched vSAN Cluster | Stretched vSAN Cluster | YES |
| ESA/OSA | Stretched vSAN Cluster | Compute-Only Cluster | YES** |

**Compute-only clusters can only mount vSAN datastores of a single architecture, which means a mix of OSA and ESA datastores cannot be mounted to the same compute-only cluster. In this row, the client is a compute-only cluster that is stretched over two sites. The same exclusivity rule applies to clusters that are not compute-only: an OSA client or server cluster can only be paired with one or more OSA clusters, and likewise ESA clusters only with ESA clusters.
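The support matrix and the exclusivity rule in the footnote can also be expressed as a small lookup, which can be handy when scripting design reviews. This is a hypothetical Python sketch transcribed from the table above: the `Arch` and `Kind` enums and both functions are illustrative names, not a VMware API, and combinations the table does not list return `None` rather than a guess.

```python
from enum import Enum
from typing import Optional, Set


class Arch(Enum):
    OSA = "OSA"
    ESA = "ESA"


class Kind(Enum):
    STANDARD = "standard"
    STRETCHED = "stretched"
    COMPUTE_ONLY = "compute-only"  # stretched over two sites in the table row


# (architecture, server cluster kind, client cluster kind) -> supported?
# Transcribed from the table; combinations it does not list are absent.
_MATRIX = {
    (Arch.ESA, Kind.STANDARD, Kind.STANDARD): True,
    (Arch.ESA, Kind.STRETCHED, Kind.STANDARD): False,
    (Arch.ESA, Kind.STANDARD, Kind.STRETCHED): False,
    (Arch.ESA, Kind.STRETCHED, Kind.STRETCHED): False,
    (Arch.OSA, Kind.STANDARD, Kind.STANDARD): True,
    (Arch.OSA, Kind.STANDARD, Kind.STRETCHED): False,
    (Arch.OSA, Kind.STRETCHED, Kind.STANDARD): True,
    (Arch.OSA, Kind.STRETCHED, Kind.STRETCHED): True,
    (Arch.ESA, Kind.STRETCHED, Kind.COMPUTE_ONLY): True,
    (Arch.OSA, Kind.STRETCHED, Kind.COMPUTE_ONLY): True,
}


def is_supported(arch: Arch, server: Kind, client: Kind) -> Optional[bool]:
    """True/False per the table; None when the table does not cover the pair."""
    return _MATRIX.get((arch, server, client))


def architectures_compatible(client_arch: Optional[Arch],
                             mounted: Set[Arch], new: Arch) -> bool:
    """Exclusivity rule from the footnote: all datastores a client uses share
    one architecture. client_arch is None for a compute-only cluster, which
    has no local vSAN datastore of its own."""
    existing = set(mounted)
    if client_arch is not None:
        existing.add(client_arch)
    # True when nothing is mounted yet or every existing architecture equals `new`.
    return existing <= {new}
```

For example, `is_supported(Arch.ESA, Kind.STRETCHED, Kind.STANDARD)` returns `False`, and `architectures_compatible(None, {Arch.OSA}, Arch.ESA)` returns `False` because a compute-only cluster that already mounts an OSA datastore cannot add an ESA one.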

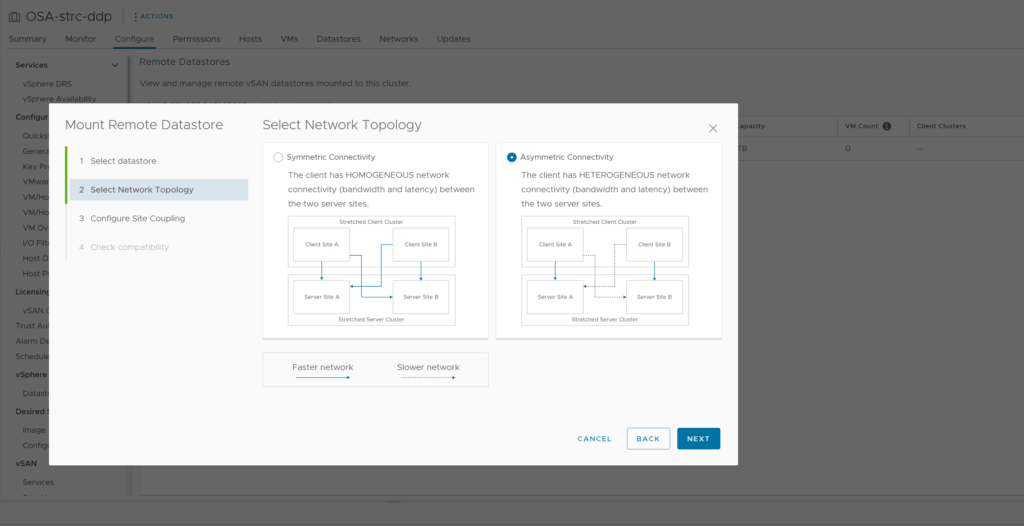

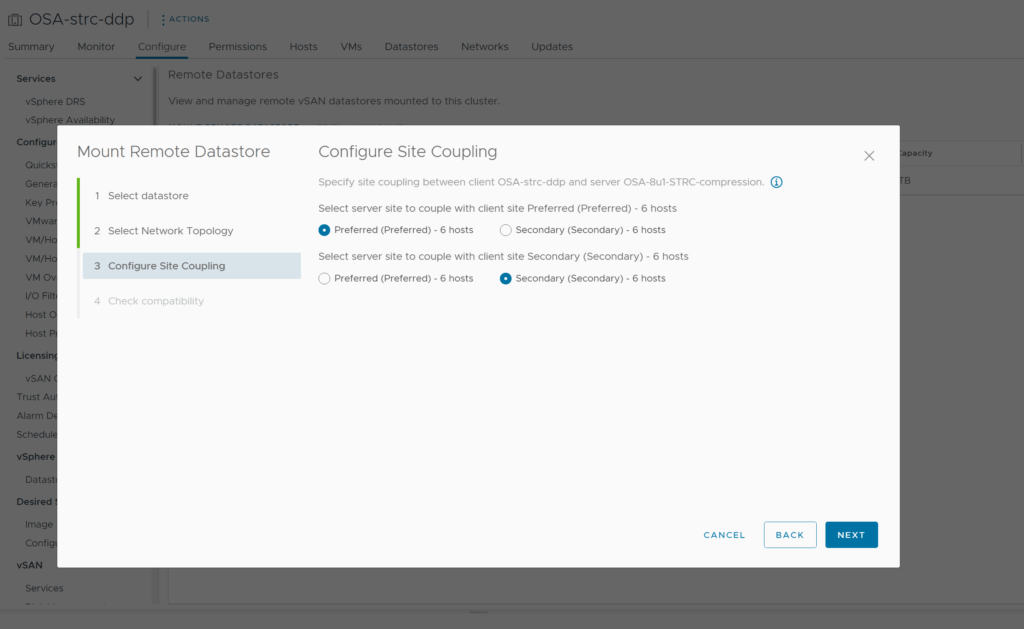

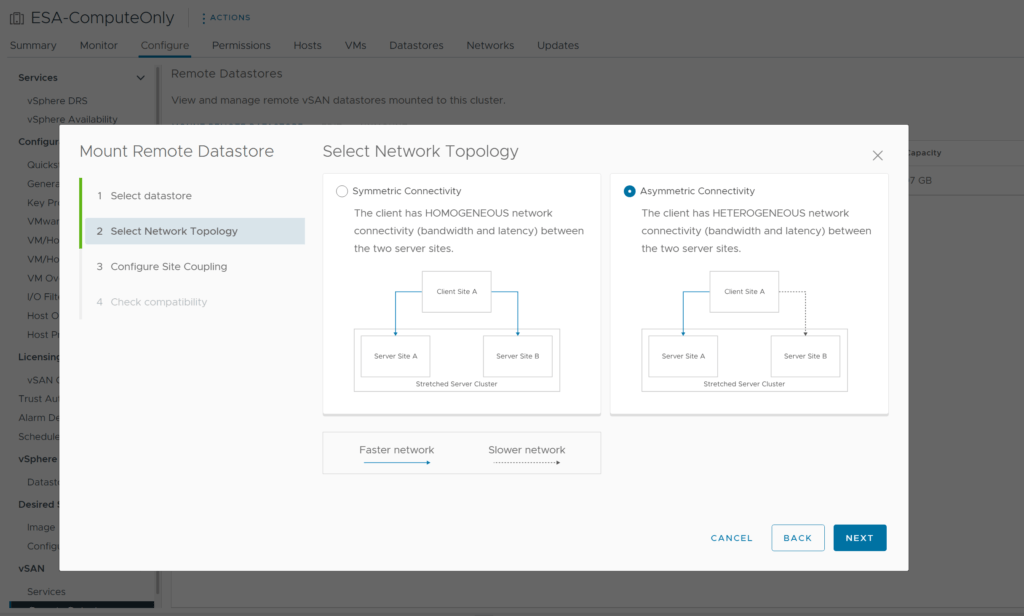

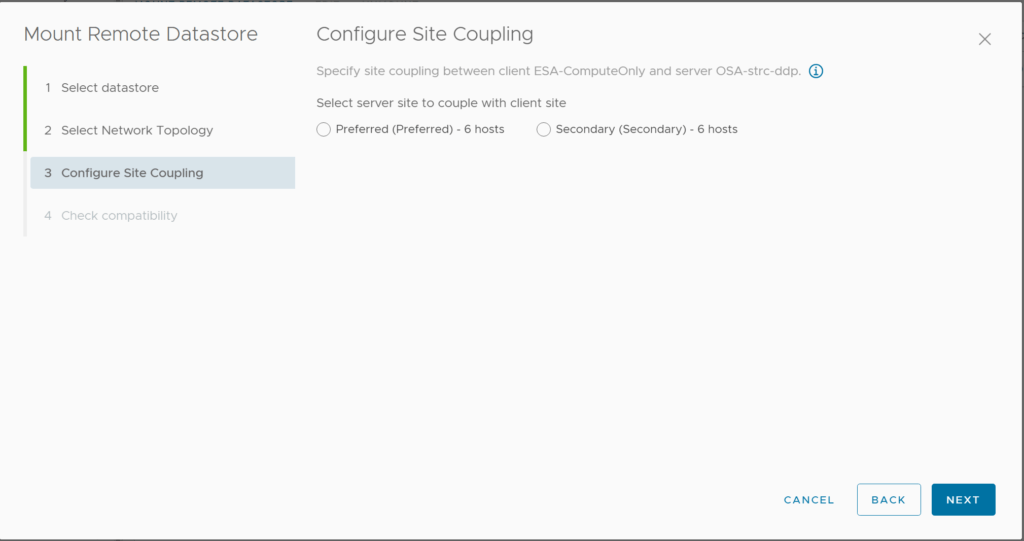

When you mount a remote datastore as a dHCI solution on a client cluster that is itself a stretched cluster, you will also be asked to choose the network topology. Suppose the hosts in the preferred site of the server cluster have a "high bandwidth low latency" connection to the hosts in the preferred site of the client cluster (that is, the preferred sites of the two clusters are in the same location), and likewise the hosts in the secondary site of the client cluster have a "high bandwidth low latency" connection to the hosts in the secondary site of the server cluster. In that case, you must select "Asymmetric Connectivity" as the network topology during the remote datastore mount operation (see the screenshots below). If you have high-bandwidth, low-latency connections between all sites, choose "Symmetric Connectivity".

Similarly, when presenting a remote datastore from a stretched server cluster to a standard vSAN cluster or a compute-only cluster, you will be asked to pick the correct topology so that all VMs created on the client cluster get the lowest latency from the remote vSAN datastore.
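The stretched-to-stretched topology decision described above boils down to which client-site/server-site pairs have a high-bandwidth, low-latency link. The following Python sketch captures only the two cases this post describes; `choose_topology` and the site labels are hypothetical, and it does not query vCenter, where the choice is actually made in the mount wizard.

```python
from typing import Optional, Set, Tuple

# Hypothetical site labels for both stretched clusters.
SITES = ("preferred", "secondary")


def choose_topology(fast_links: Set[Tuple[str, str]]) -> Optional[str]:
    """fast_links holds (client_site, server_site) pairs joined by a
    high-bandwidth, low-latency connection."""
    all_pairs = {(c, s) for c in SITES for s in SITES}
    same_site = {(site, site) for site in SITES}
    if all_pairs <= fast_links:
        # Every client site is well connected to every server site.
        return "Symmetric Connectivity"
    if same_site <= fast_links:
        # Only matching sites (preferred-preferred, secondary-secondary) are.
        return "Asymmetric Connectivity"
    # Neither case from this post applies; review the network design.
    return None
```

Returning `None` for anything else is a deliberate choice in this sketch: a layout where even the matching sites lack a fast link is not covered by either option described here.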

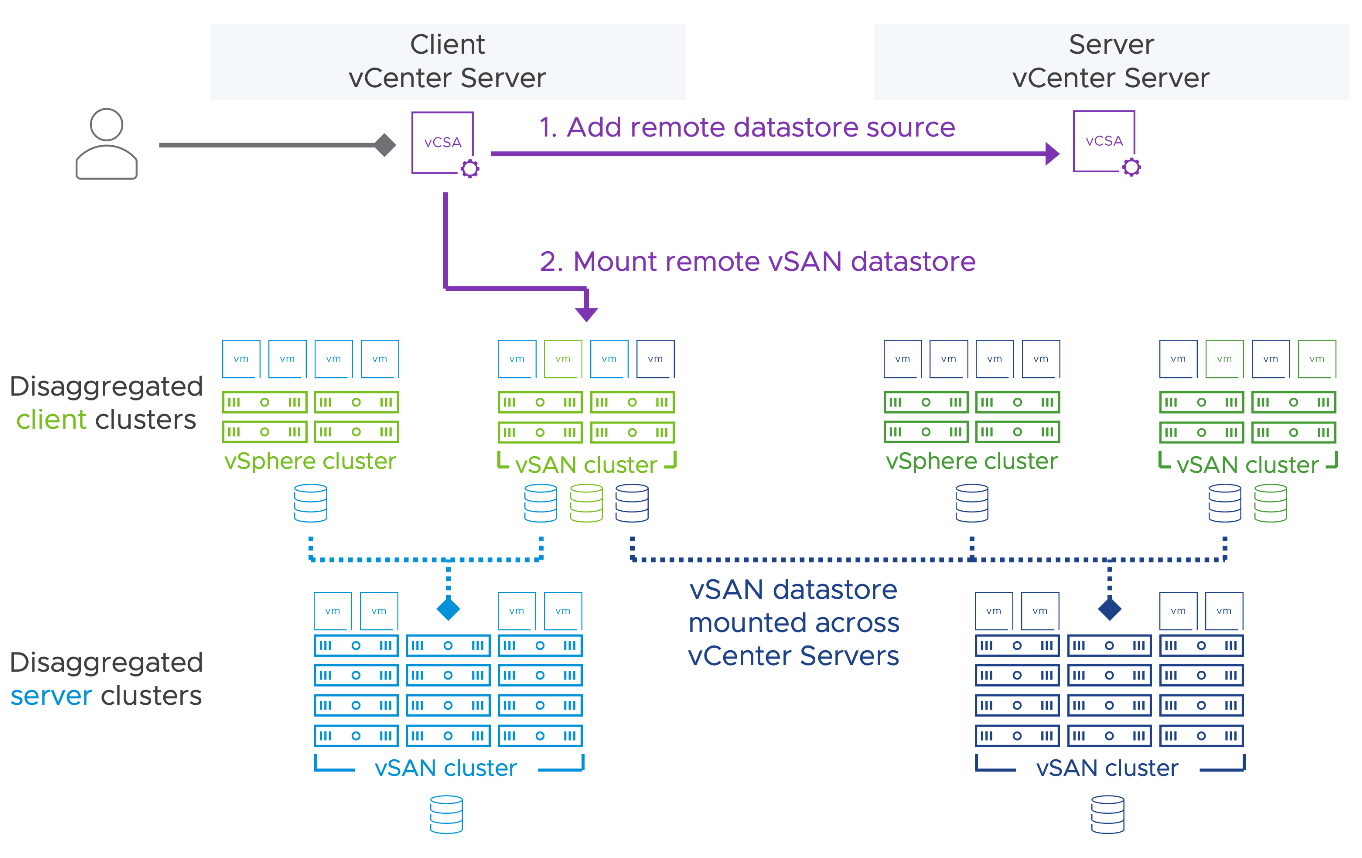

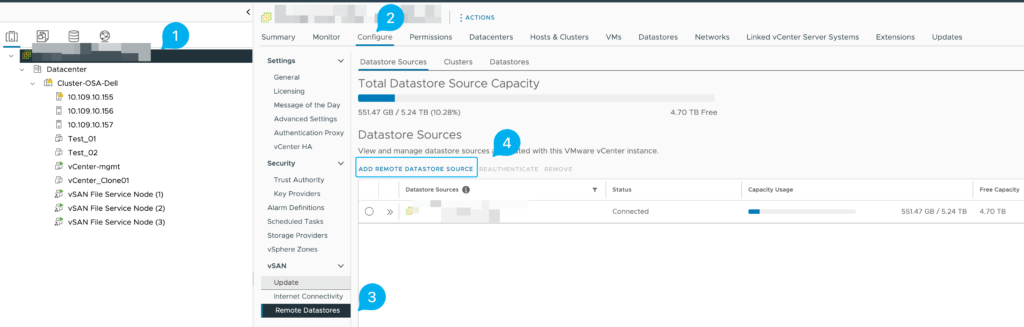

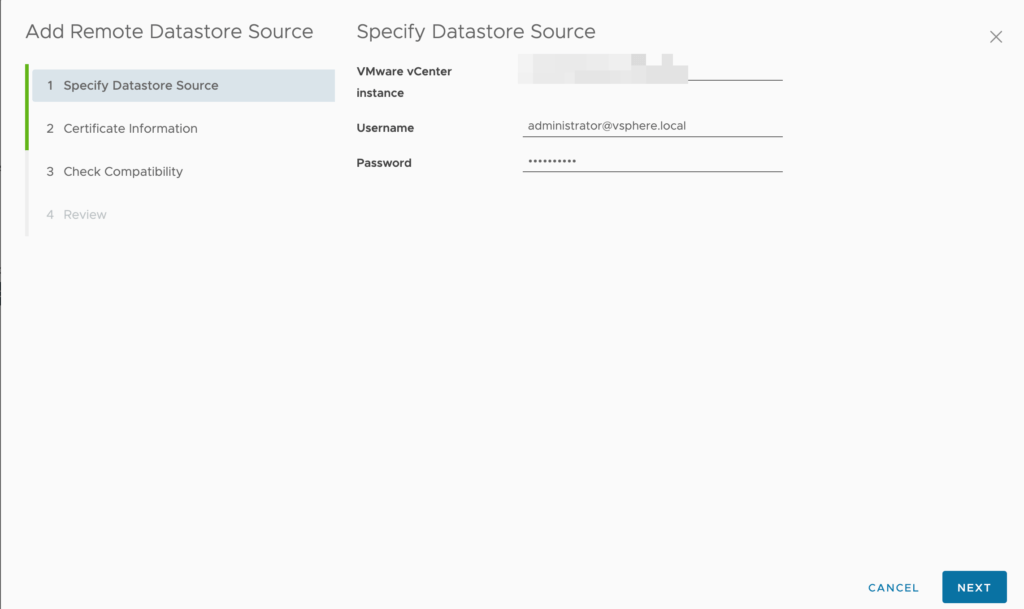

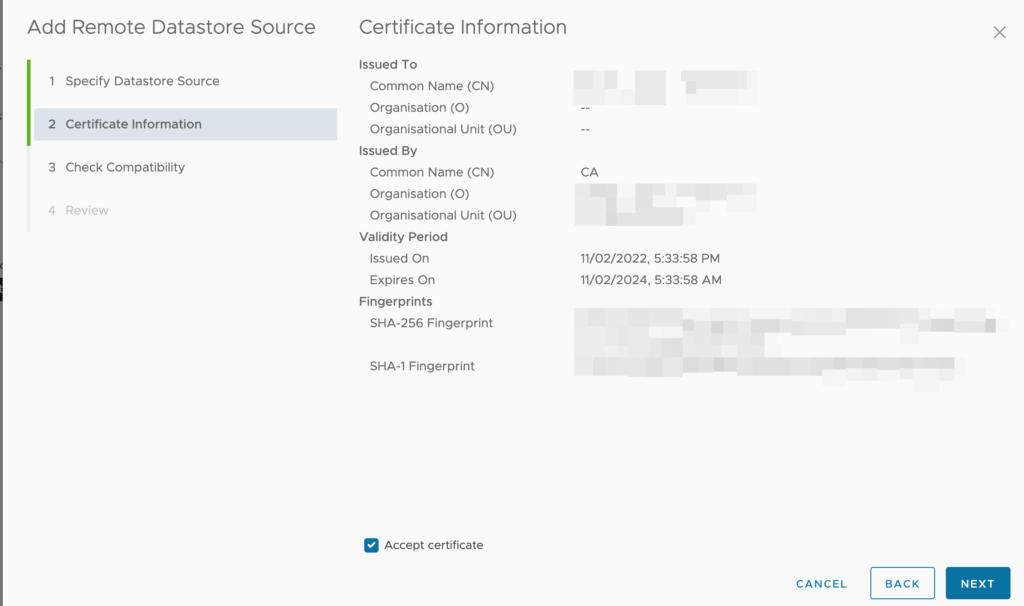

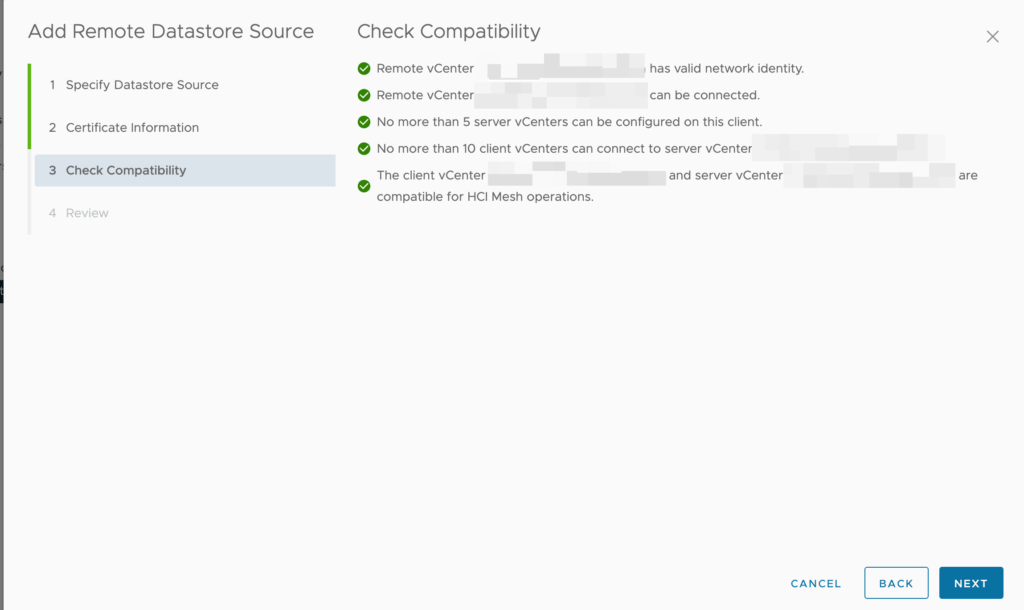

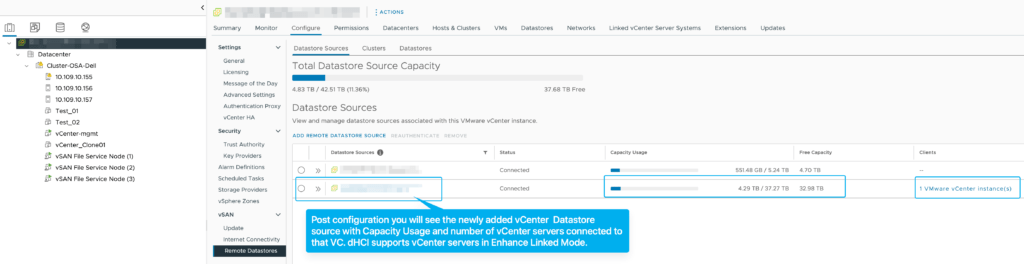

Cross vCenter Server consumption of remote vSAN Datastore

We now have support for mounting a remote vSAN datastore (dHCI) across one or more vCenter Servers for vSAN clusters running the Original Storage Architecture. All existing workflows and pre-checks have been updated to accommodate disaggregated connectivity across vCenter Servers. To support such connections, participating clusters must be running vSAN 8 U1 or later, with all participating vCenter Servers running the very latest version. Mounting a remote ESA vSAN datastore across vCenter Servers is not yet supported in this release.

Using disaggregation across vCenter Servers adds new scaling limits. A single client vCenter Server can consume datastores from up to 5 server vCenter Servers, and a single server vCenter Server can serve datastores to up to 10 client vCenter Servers. These limits are very similar to those within a single vCenter Server.
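Because the cross-vCenter limits count distinct vCenter Servers rather than clusters or mounts, a set-based count is a natural way to check a planned design. In this hypothetical Python sketch, each mount is a `(client_vcenter, server_vcenter)` pair; the function name and input shape are assumptions for illustration, and the limits (5 and 10) are the values quoted above.

```python
from collections import defaultdict
from typing import List, Tuple

# Cross-vCenter limits as quoted in this post (vSAN 8 U1, OSA only).
MAX_SERVER_VCENTERS_PER_CLIENT_VCENTER = 5
MAX_CLIENT_VCENTERS_PER_SERVER_VCENTER = 10


def cross_vcenter_violations(mounts: List[Tuple[str, str]]) -> List[str]:
    """mounts holds one (client_vcenter, server_vcenter) pair per remote mount.
    Mounts within a single vCenter are ignored; they fall under cluster limits."""
    servers_of = defaultdict(set)  # client vCenter -> distinct server vCenters
    clients_of = defaultdict(set)  # server vCenter -> distinct client vCenters
    for client_vc, server_vc in mounts:
        if client_vc == server_vc:
            continue
        servers_of[client_vc].add(server_vc)
        clients_of[server_vc].add(client_vc)

    violations = []
    for vc, servers in sorted(servers_of.items()):
        if len(servers) > MAX_SERVER_VCENTERS_PER_CLIENT_VCENTER:
            violations.append(f"client vCenter {vc} consumes {len(servers)} server vCenters")
    for vc, clients in sorted(clients_of.items()):
        if len(clients) > MAX_CLIENT_VCENTERS_PER_SERVER_VCENTER:
            violations.append(f"server vCenter {vc} serves {len(clients)} client vCenters")
    return violations
```

Using sets means several clusters mounting from the same remote vCenter Server count only once toward these limits.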

Attempting to add an ESA datastore from a remote vCenter Server will fail the compatibility check when adding a remote datastore at the cluster level. Likewise, if the client cluster and server cluster are running different ESXi versions or on-disk format versions, the compatibility checks will prevent the remote datastore from being mounted.
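The compatibility conditions in the previous paragraph can be sketched as a pre-flight check. This hypothetical Python example models only the rules stated in this post (no cross-vCenter ESA mounts, matching ESXi versions and matching on-disk format versions); `ClusterInfo` and `remote_mount_precheck` are illustrative names, and the real pre-checks in vCenter may evaluate more conditions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ClusterInfo:
    """Hypothetical summary of the attributes the pre-check compares."""
    vcenter: str
    architecture: str         # "OSA" or "ESA"
    esxi_version: str         # free-form version label, e.g. "8.0 U1"
    disk_format_version: str  # free-form on-disk format label


def remote_mount_precheck(client: ClusterInfo, server: ClusterInfo) -> List[str]:
    """Return the reasons a mount would be blocked; an empty list means pass."""
    reasons = []
    if client.vcenter != server.vcenter and server.architecture == "ESA":
        reasons.append("cross-vCenter mount of an ESA datastore is not supported")
    if client.esxi_version != server.esxi_version:
        reasons.append("client and server clusters run different ESXi versions")
    if client.disk_format_version != server.disk_format_version:
        reasons.append("client and server clusters use different on-disk format versions")
    return reasons
```

Collecting every failing reason, instead of stopping at the first, mirrors how a pre-check report is usually read: all blockers can be fixed in one pass.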